Author: Paolo Cesarini, Chair of the Executive Board, European Digital Media Observatory (EDMO)

This text has been published as part of the first edition of the new monthly EDMO Signals & Noise newsletter.

From AI-generated “parallel realities” to electoral manipulation and competing geopolitical narratives, the disinformation landscape is rapidly evolving, with three trends taking shape simultaneously over the past month.

1. War-related disinformation as a dominant and emotionally charged driver

The conflict in the Middle East was the subject of 39% of all disinformation detected in March, confirming a well-known dynamic: disinformation tropes shift in sync with audience interest, focusing on the events that attract the most public attention. Taking into account pre-existing and persistent narratives around the conflicts in Ukraine and Gaza, the aggregated share of war-related disinformation exceeded 50%, which suggests an overall dominance of misleading or fabricated content tailored to provoke strong emotional reactions – fear, outrage, or solidarity – across wide, international audiences. Disinformation thus intersects with geopolitical strategies, influencing public opinion far beyond national borders.

The systematic use of AI-generated visuals that simulate battlefield events or distort military outcomes has contributed to this dominance. Pro-Iran and pro-Israel/US networks alike, both domestic and non-European, have deployed synthetic content to construct partisan narratives, while opportunistic actors have exploited the same material purely for monetisation, leveraging algorithms that reward engagement and popularity. The scarcity of verified information – due to war censorship – has further amplified this vulnerability, creating fertile ground for speculation and falsehoods. Yet not all disinformation was AI-generated: a large share still consists of recycled or decontextualised authentic footage, repurposed to fit current events, reflecting a hybrid ecosystem where old and new manipulation techniques coexist.

Moreover, the noise surrounding military operations has spilled over into several information domains, cutting across European security, diplomatic relations between NATO allies, the Vatican’s posture towards the war, and the economic consequences of oil and gas supply disruptions. Just as during Covid, conspiracy theories about “energy lockdowns” are thriving, and real-world impacts have been observed in Romania – where rising prices at the pump have re-ignited anti-government tropes – and in rural Ireland, where narratives linking the fuel crisis, immigration and economic hardship have sparked street protests.

2. Electoral disinformation: systemic and transnational

With four national elections taking place in Denmark, Slovenia, Hungary and Bulgaria in the span of just one month, EDMO monitoring has shown that electoral disinformation in Europe is not episodic or fringe, but systemic, technologically amplified, and deeply entangled with political power struggles.

Firstly, all four electoral campaigns were exposed to disinformation and FIMI, albeit in different forms and to different degrees. As expected, the Hungarian (by far) and Bulgarian elections were the most exposed. But no country was immune. While the situation in Denmark was generally calm and without major incidents, a last-minute cyberattack by a pro-Russian hacker group and evidence of LLM grooming by networks of Russian websites showed that no electoral context is completely risk-free. Similarly, the Slovenian electoral campaign did not prompt serious alerts until a major, last-minute hack-and-leak operation involving an Israeli agency led the Slovenian Prime Minister, Robert Golob, to call on Ursula von der Leyen to investigate what he considered a “hybrid attack” on EU democratic processes.

Secondly, delegitimisation narratives – claims that elections are unfair, manipulated, stolen, or controlled by foreign powers – have become a recurring theme across different campaigns. Legitimate actions, such as media scrutiny or law enforcement, are in turn reframed as authoritarian overreach. This “cognitive shaping” aims less to persuade than to erode trust in democracy over time.

Thirdly, electoral disinformation, especially in Hungary, was heavily marked by fear-based geopolitical narratives. Opposition figures have been portrayed as agents of foreign interests, accused of serving Brussels or Kyiv and dragging the country into conflict, while EU institutions and NGOs were depicted as external actors interfering in matters of national sovereignty.

Fourthly, disinformation has been weaponised through increasingly sophisticated manipulative techniques, involving coordinated inauthentic networks and covert political ads on large platforms such as Meta and TikTok, the strategic use of blind spots on less regulated platforms such as Telegram, and AI-generated content aimed at discrediting opposition figures. These tactics were not random; they were often carefully staged to mimic investigative journalism, giving false claims a veneer of legitimacy.

Even when electoral outcomes shift – as suggested by Viktor Orbán’s political setback – the informational damage persists. False narratives, once embedded, continue to circulate within a weakened media ecosystem, shaping public perceptions or even rebounding beyond national borders (e.g. Hungary-Romania tensions). Disinformation’s impact is cumulative and durable, outlasting the events that generate it.

3. The cumulative effect of Gen-AI and the platform economy

A central trend is the industrialisation of disinformation through artificial intelligence. AI-generated content – ranging from fabricated images to fully synthetic narratives – is now cheap to produce, scalable, and increasingly indistinguishable from authentic material. In March, it represented 20% of detected disinformation across all topics.

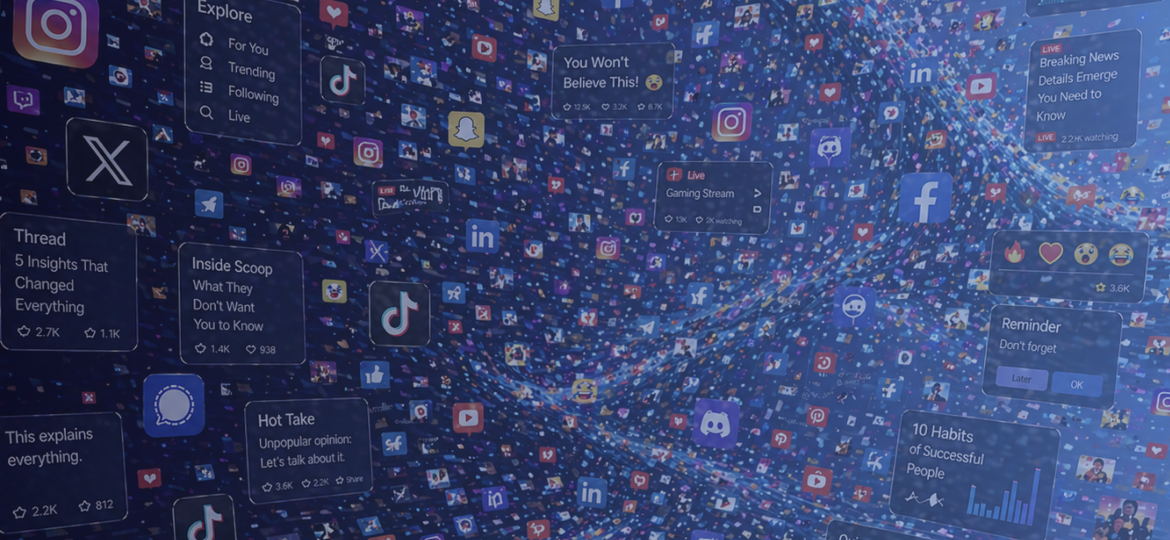

Closely linked is the rise of “AI slop” – low-quality, high-volume content designed to flood digital platforms. It underscores the role of economic incentives: content farms and opportunistic actors exploit platform algorithms for revenue, inadvertently or deliberately amplifying political manipulation. Instead of persuading through credibility, they overwhelm users with repetition and noise, making it harder to distinguish truth from falsehood. The strategy echoes older manipulation techniques like flooding and astroturfing but operates at unprecedented scale, using off-the-shelf workflow automation solutions, often provided by venture capital-backed start-ups (e.g. Doublespeed), which integrate tools for managing accounts, distributing content, and simulating engagement. By saturating feeds, disinformation actors degrade the overall information environment, eroding trust not only in specific facts but in the very possibility of reliable knowledge.

Drowning the Democratic Commons

Audiences are immersed in “parallel realities” where fabricated feel-good stories may overlap with conspiracy content (particularly around sensitive topics such as the war in Ukraine or EU governance), blurring the lines between state-sponsored propaganda, partisan domestic messaging, and profit-driven clickbait. This points to a shift in the psychological dimension of disinformation. Apart from purely opportunistic operations, politically motivated campaigns are increasingly aimed at producing confusion, cynicism, and disengagement, rather than simply convincing audiences of specific falsehoods. By flooding user feeds with contradictory or sensationalist content, they foster an environment where all information appears suspect and no source reliable. This erosion of shared reality has arguably become one of the most significant challenges for democratic societies, where informed debate depends on a baseline of commonly accepted facts. The European Digital Media Observatory operates precisely at this fault line, working to uphold information integrity and sustain democratic discourse.