AI (and More) Shaping Gulf War Perceptions in Europe

ON THE RISE

The war that the United States and Israel are waging against Iran, according to EDMO’s preliminary assessment, has been the main topic of disinformation in Europe in recent weeks*. Several phenomena are contributing to the circulation of a significant amount of false or misleading content, especially on social media platforms (notably X, TikTok, Meta, and YouTube), but also on chat apps and in traditional media, both online and offline.

Generative AI technologies are playing a major role in shaping how millions of citizens in Europe perceive what is happening. Social media platforms are flooded with AI-generated videos and images that purport to portray events in the Gulf region and, more broadly, the Middle East.

Pro-Iran accounts, for example, are spreading countless AI-generated videos and images that exaggerate the success of the Islamic Republic’s retaliation against Israel and the United States. Pro-Israel accounts, for their part, are sharing AI-generated content that mocks the Iranian army and its leadership.

Many accounts share AI-generated videos and images (and sometimes footage of real events) not because they support one side of the conflict or the other, but because they aim to exploit social media platforms’ monetization mechanisms. Shocking, extreme, violent and polarizing content that appeals to people’s deepest emotions (fear, hatred, sympathy, anxiety, etc.) easily goes viral, both because platform algorithms tend to promote such content and because it is inherently engaging. The same applies to content (news, videos, images, etc.) targeting specific polarizing entities and public figures, such as Israel, the United States, Iran, Trump, Netanyahu, Muslims, Jewish people and so on.

In addition to these already significant issues, AI-powered chatbots are increasingly emerging as active spreaders of false information. On X, in particular, Grok has become a source many users turn to for verification, while at the same time consistently spreading disinformation across a range of topics, both by presenting false claims as true and by dismissing accurate information as false.

Not all of the disinformation in circulation is AI-generated, however: a lot of real but old or unrelated content is circulating with misleading captions that frame it as connected to the ongoing war in Iran. Again, this is most likely done for propaganda or monetization purposes, exploiting the shortage of authentic footage caused by military censorship, which limits independent journalists’ ability to report from the war zones. The same happens with unfounded, false or misleading news reports that lack any confirmation.

Social media platforms have therefore become spaces where people still seek information during crises, but where information is heavily polluted by actors spreading disinformation and propaganda for various reasons: to make money, to support state actions (on all sides), and to pursue national political goals (extremist forces often exploit such situations to foster xenophobic, Islamophobic, and/or antisemitic sentiments).

Social media platforms appear to be aware of at least some of these issues, which largely depend on the platforms’ algorithms, policies and monetization systems. For example, Nikita Bier, head of product at X, said on March 3 that users who post AI-generated videos of an armed conflict without disclosing that they were made with AI will be suspended from Creator Revenue Sharing for 90 days. However, experts pointed out that even this fix is unlikely to solve the problem.

All this is happening against a backdrop of contradictory messaging from both the Iranian and the US-Israeli political leaderships, notably on critical issues such as the impact of oil price shocks on the global economy. In the event of a protracted blockade of the Strait of Hormuz, rampant disinformation could deeply influence public perceptions of how the conflict evolves and of its potential global impacts, while encouraging speculation on highly volatile markets and exposing households’ savings to unpredictable market fluctuations.

*The precise quantitative monitoring, carried out in accordance with EDMO’s methodology, will be available in the next EDMO monthly brief, which will be published in mid-April 2026.

ZOOM-IN

The war in Iran has prompted an unprecedented flood of AI-generated videos worldwide, exacerbating informational uncertainty around the strategic goals, targets, material consequences and socio-economic impacts of the ongoing military operations. But it has also prompted an array of AI-generated and non-AI false narratives targeting European interests and speaking to European audiences.

Where did the drone that struck Akrotiri come from?

On the night of 1 to 2 March 2026, a drone struck the RAF Akrotiri base in southern Cyprus, causing limited damage with no reports of injuries. Over the following hours and days, other drones allegedly heading towards British bases were intercepted.

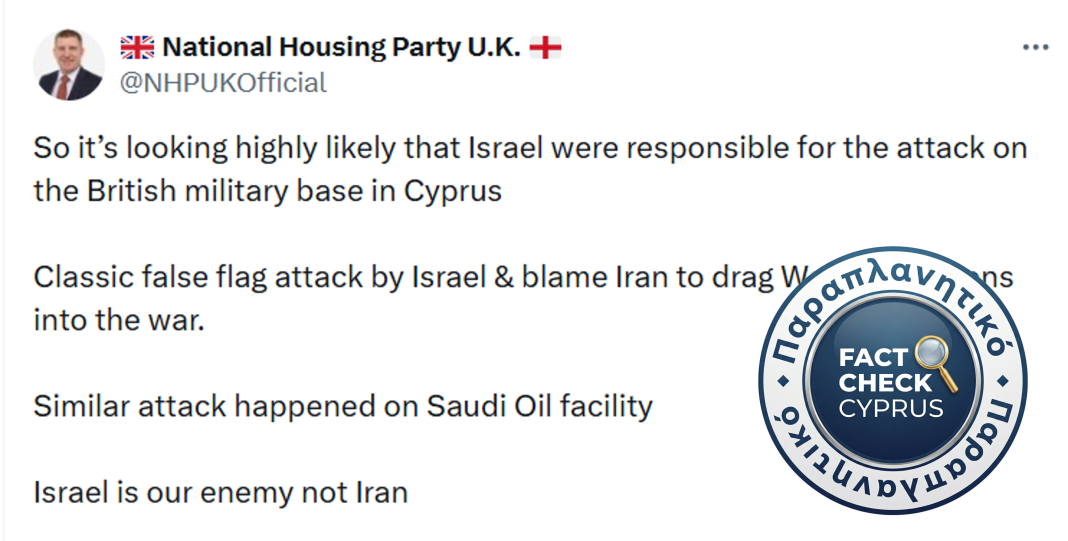

While security investigations were still pending, a stream of social media posts on X and other platforms claimed that the drone attack was a “classic Israeli false flag attack,” designed to blame Iran and drag Western countries into war. The claim appeared in an article published on a Greek conspiracy website and quickly gained traction on X. A significant contribution to its spread came from the extremist National Housing UK account.

However, according to MedDMO analysis, the evidence so far indicates that an Iranian-designed Shahed drone was used and that the primary focus of the investigations was on the Iranian-controlled Lebanese militia (Hezbollah) rather than Israel. There is no credible evidence that the attack on Akrotiri was an Israeli operation.

Spoofing attack using a fake Keir Starmer post amidst spats between Trump and NATO partners

A viral social media post falsely claimed that UK Prime Minister Keir Starmer threatened to expel US forces from British bases within 48 hours if the United States left NATO. The fabricated message was widely shared across platforms such as X, Facebook, Instagram, and Reddit, gaining millions of views. The hoax spread after President Donald Trump angrily criticized NATO members that refused to join the war, hinting at a possible US withdrawal. The post originated from a satirical Threads account that later admitted it was a spoof, highlighting how quickly fake posts can spread across different groups and social media platforms.

Ukrainian soldiers sent to the Persian Gulf?

Videos portraying AI-generated ‘fake soldiers’ are trending on multiple platforms, according to an analysis by BENEDMO. These include a wave of AI-generated videos on TikTok falsely portraying Ukrainian soldiers criticizing Ukraine’s cooperation with international partners against Iranian drones. The videos, spread through newly created accounts, promote a fabricated claim that “300 troops” were sent to the Persian Gulf to serve political interests, and call for halting military mobilization if troops can be deployed abroad. Attributed to Russian propaganda, the campaign aims to convince military and civilian audiences that Ukraine’s defence capabilities are weakening and to stir public unrest. Ukrainian President Volodymyr Zelenskyy has emphasized that cooperation with partners strengthens air defence and helps prevent further escalation of the war. No figures about troop deployments have been publicly confirmed.

Soaring oil prices fuel opposition to the Romanian government

Triggered by attacks on oil infrastructure and disruption of traffic through the Strait of Hormuz, the fuel crisis has driven up prices at the pump around the world, threatening households’ disposable income. The ARD opposition party in Romania has accused the government of failing to cap prices at the pump, claiming that, unlike Romania, Hungary and most other European countries had taken timely measures to protect their citizens. The argument, which has been shown to be factually false, may nevertheless reappear in other contexts or countries, leveraging rising fears of an oil and gas supply crunch and increased market volatility.

ELECTION BEAT

Attempted hacker attack on Danish parties the day before the election

A pro-Russian hacker group, NoName057(16), launched cyberattacks on several Danish political party websites the day before the general election. The attacks were mainly DDoS (overload) attacks and briefly disrupted some sites. The overall impact was limited and at least one party (the Danish Social Liberal Party) successfully repelled the attack. Danish authorities had already warned such interference was likely, and the group has a history of targeting Denmark, with suspected links to the Russian state.

Slovenia flags alleged foreign interference ahead of elections

Slovenian Prime Minister Robert Golob has called on Ursula von der Leyen to investigate alleged election interference by the Israeli intelligence firm Black Cube, as reported by Politico. Leaked recordings, reportedly linked to illegal surveillance, surfaced shortly before the March 22 vote and appeared to tie Golob’s government to corruption.

The Prime Minister warned that this represents a broader “hybrid threat” to EU democratic processes, and urged the EU to assess the case under the European Democracy Shield. No major prior incidents were reported during the campaign, and a more in-depth analysis will be provided in the coming days by the Adria Digital Media Observatory (ADMO), the EDMO hub covering Croatia and Slovenia.

Hungary: AI-driven disinformation and Russian bots

The new weekly digest by Lakmusz highlights emerging disinformation trends ahead of Hungary’s April elections, including limited activity by the Russian-linked Matryoshka bot network and the spread of misleading political content online. While the network has circulated fabricated videos targeting the campaign, their reach appears so far limited and potentially artificially amplified. False narratives such as a staged banner linking Péter Magyar to Volodymyr Zelenskyy, and misleading reporting on rally attendance, have circulated in the media ecosystem. The article also identifies a surge in AI-generated political content on platforms like TikTok and Facebook, with coordinated networks promoting pro-government narratives and attacking opposition figures.

GLOBAL PULSE

No, Pfizer vice president did not say Covid vaccines killed 17 million people

A viral social media post falsely claims that Michael Yeadon, described as a “vice president of Pfizer,” said Covid-19 vaccines caused 17 million deaths and that the pandemic never existed.

In reality, Yeadon was only vice president of a specific research division unrelated to vaccines and left the company in 2011. Since 2020, he has been known for promoting unfounded and pseudoscientific claims about Covid-19. The article debunks these statements, confirming that the Covid-19 pandemic is scientifically well-documented, that vaccines do not alter human DNA, and that there is no credible evidence linking them to mass deaths.

The “17 million deaths” figure comes from a widely discredited analysis that failed to establish any causal relationship between vaccination and mortality, while substantial research shows that Covid-19 vaccines have saved millions of lives globally.

ON A DIFFERENT NOTE

Disinformation is increasingly recognised as a strategic threat to businesses and the economy. The World Economic Forum (WEF) estimates that the global economy loses tens of billions of dollars each year to disinformation.

This edition draws in part on automated translation and reflects information available as of 25 March 2026; later developments may not be included.