IN THIS EDITION

ON THE RISE

Weekly Watch of Emerging Disinformation Risks

AI Slop: How Greed Is Affecting Democracies

The journalist and technology expert Jack Brewster writes in his Substack newsletter that we braced ourselves for AI’s impact on elections, but instead “it has gone predominantly commercial. In fact, an entire industry has sprung up around synthetic content used to market products, manufacture fake audiences, and extract revenue from platforms that reward engagement whether or not a human is behind it.”

Even considering the unprecedented amount of AI-generated content about the war waged by the U.S. and Israel against Iran, or about the Hungarian elections, produced for propaganda and manipulation purposes, it is quite evident that the main driving force behind the avalanche of AI-generated content now flooding social media feeds every day is less a desire to reshape societies (whether for better or worse) than a far more prosaic motive: greed. Legions of unscrupulous influencers use AI technology to create and disseminate synthetic content presented as real, leveraging users’ emotions to gain virality and exploiting social media platforms’ monetization mechanisms to turn virality into money. Behind these “slopfluencers”, as Brewster writes, “sits an industrial supply chain of coaches selling the course, operators running the accounts, and tool builders automating the posting”: people interested in profits, no matter the consequences.

Yet it is important to stress that this kind of AI-generated content, even if driven by commercial goals, may have an impact, perhaps largely unintended, on elections and more broadly on democracies. It can shape political attitudes, reinforce prejudices, and in the end influence democratic processes.

Among the examples cited by Brewster is the Instagram account known as “Rabbi Goldman,” which amassed roughly 1.5 million followers by packaging what it claimed to be Jewish financial wisdom, content that in reality recycled long-standing antisemitic conspiracy theories. Another example is Sri Lankan influencer Geeth Sooriyapura, who set up a network of Facebook pages that spread Islamophobic and racist AI-generated posts targeting British audiences, with the goal of generating revenue through advertising and traffic. On many occasions, coordinated networks have circulated AI-generated disinformation about ongoing wars, climate change, public health crises, and other highly sensitive issues. Each fabricated image, false post, or misleading chat with an LLM contributes to a broader ecosystem of distortion.

The cumulative effect of this torrent of false and toxic content is not limited to isolated misunderstandings. One false item after another, like the constant dripping that wears away the stone, it gradually alters public perception of complex phenomena, ranging from economic stability to national security, from foreign policy to public health. In the end, democracy itself is affected, as public consensus on critical issues follows perceptions that are artificially shaped by synthetic realities that find little or no validation in the real world.

This leads to another crucial point: the main object of scrutiny should not be the so-called industry of “AI slop” itself, but the monetization systems that sustain it. The proliferation of deceptive synthetic content is not an accident of technology alone; it is a predictable outcome of platform policies that reward engagement without regard for what is engaging.

Even as the scale of the problem has become undeniable, social media companies continue to generate billions in revenue from the very ecosystems that enable the erosion of democratic trust, while refraining from meaningful action or investment that could significantly reduce the harm to societies. This raises a distressing question: is it really just about money? Or is weakening societies and democracies, in the end, the real long-term goal of at least some social media platforms?

A faint source of hope may nonetheless lie in the unintended consequences of this technological shift. As AI-generated content falsely presented as real becomes more pervasive, the overall reliability of information encountered on current social networks may decline to the point that users no longer consider any information found there to be trustworthy. Reliable information will simply migrate elsewhere, to environments where editorial oversight and verification standards ensure credibility.

Of course, there is always the risk that people will abandon the very idea that reliable information exists at all. At that point, disinformation achieves its ultimate victory: not by convincing people of a specific falsehood, but by persuading them that truth itself is unattainable. This is a scenario that society as a whole (schools, media organizations, universities, as well as regulators and legislators) should do everything in its power to avoid.

ZOOM-IN

A Closer Look at Cases Detected By the EDMO Network

AI-powered influence is no longer experimental but operational. Systems such as Doublespeed industrialize online manipulation by combining AI text or image generation with workflow automation, including tools that manage accounts, distribute content, and simulate engagement. This creates a highly scalable astroturfing machine capable of bypassing platform safeguards by appearing like normal user activity. Defending against it requires focusing on system-level behavior, not just AI-generated content. Some recent analyses from the EDMO Network expose the pervasiveness of this emerging phenomenon and offer some practical tips for social media users to remain vigilant.

Behind Infinite Facts, Malta’s AI feel-good fake story generator

A Facebook page called “Infinite Facts” has gained over 23,000 followers by posting heartwarming stories about kindness and heroism in Malta. While these stories appear uplifting, the fact-check reveals that most are entirely fabricated using AI-generated text and images. Examples include fictional tales of grieving parents, heroic animals, and groundbreaking scientific discoveries, none of which actually occurred. The page mixes obviously false stories with more plausible or even real news, making it difficult for readers to distinguish facts from fiction. It also frequently features well-known public figures in exaggerated or invented scenarios to boost engagement.

The MedDMO article highlights how AI-generated “feel-good” disinformation can spread online, exploiting platform algorithms and blurring the line between truth and fiction. It traces the page’s origins, noting that it was created in 2022 under a different name and rebranded multiple times before becoming “Infinite Facts.” Its content strategy evolved from generic global posts to Malta-focused stories, rapidly increasing its popularity. The primary motive appears to be “engagement farming”: creating emotionally charged, shareable content to maximize likes, comments, and followers. This can later be exploited for profit, for example through scams or monetization programs tied to social media engagement.

Another example of engagement farming can be found in AI-generated space-related content, which is easy to create, visually striking, sparks curiosity, and is widely shared. A recent article by Verificat highlights how AI-generated images of a strange object moving across two lunar craters, allegedly taken by Artemis II, were distributed on Facebook and Instagram by accounts bearing the “blue checkmark”. Such a checkmark is reserved for accounts that participate in Meta’s monetisation program, though verification doesn’t guarantee authenticity or credibility.

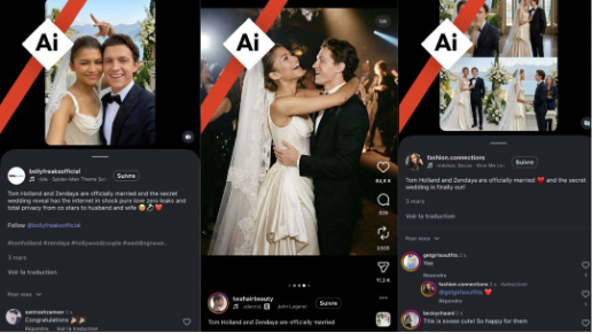

How to Spot an AI-Generated Image? The Classic Case of Zendaya and Tom Holland’s Fake Wedding Photos

The viral fake photos of Zendaya and Tom Holland’s supposed secret wedding spread widely on social media in early March, attracting millions of likes and convincing many users they were real. Even Zendaya herself noted that “many people were fooled.”

The article from EDMO Belux uses this hoax as a case study to explain how to identify AI-generated images online. First, it stresses basic plausibility: a “secret” wedding would not realistically produce numerous high-quality photos from different angles. It also advises checking official sources, for instance by verifying the two Hollywood celebrities’ official accounts. Next, it recommends cross-checking content across multiple platforms and using reverse image searches to trace the origin and spread of images. In this case, the photos were linked to accounts known for systematically sharing AI-generated content. The article also highlights the importance of reading captions and comments, where clues like “AI-generated” labels may appear. Finally, it suggests using detection tools such as Hive Moderation, SynthID, or InVID-WeVerify. Overall, the piece emphasizes critical thinking and verification habits to avoid being misled by increasingly realistic AI-generated visuals.

Almost Everything Wrong: Google’s AI Summary Repeatedly Gives Incorrect Information About AI Images

The article examines the reliability of Google’s AI-generated summaries (“AI Overviews”), focusing on how they handle questions about AI-generated images and concluding that these summaries often produce incorrect, misleading, or inconsistent information. Faktabaari demonstrates its findings through practical tests, asking Google’s AI Overview to evaluate a range of images: some real, some AI-generated, and some already debunked online. The results show errors in the vast majority of cases: the system misclassifies images, gives uncertain or contradictory explanations, and sometimes presents speculation as fact. This inconsistency is particularly problematic because users may assume that prominently displayed answers at the top of search results are reliable.

The article also highlights how the system lacks transparency. It does not clearly explain how conclusions are reached, nor does it always provide verifiable sources. This makes it difficult for users to assess the credibility of the information or trace it back to trustworthy origins. The article concludes by urging users to treat AI-generated summaries with caution, especially when assessing images. It recommends cross-checking information, using reverse image searches, and consulting reliable fact-checking sources before accepting claims as true.

ELECTION BEAT

Tracking electoral disinformation through EDMO Hubs

Telegram: Electoral Digital Blindspot

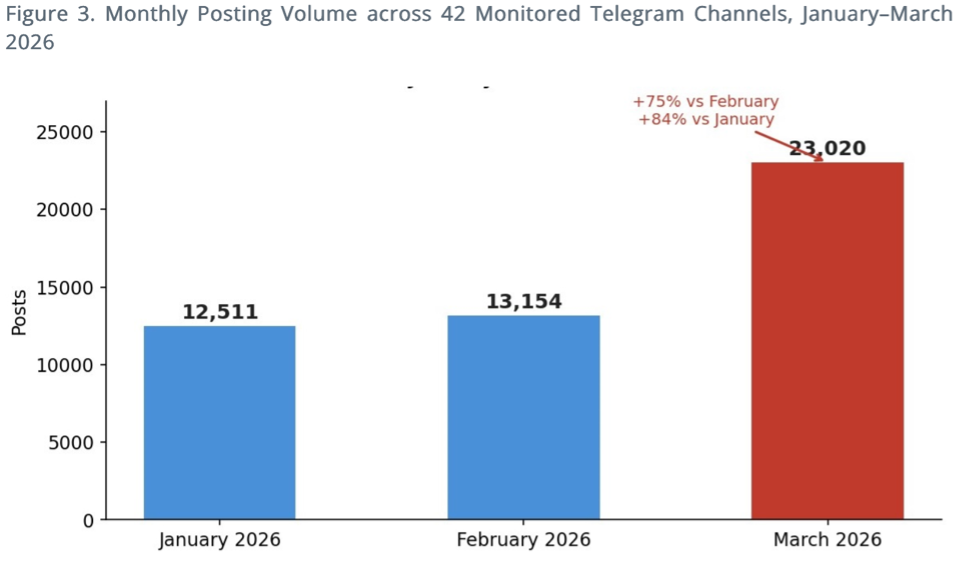

According to this report by BROD, Telegram has emerged as a significant regulatory and analytical blind spot in the run-up to Bulgaria’s 19 April parliamentary election. The platform hosted a dense and highly active ecosystem of political content with limited transparency and oversight.

A new analysis of nearly 49,000 posts across 42 Bulgarian-language channels shows that more than half of all content circulated in coordinated, cross-channel clusters—often reworded to appear organic—reaching substantially larger audiences than isolated posts. Activity surged sharply in the final weeks before the vote, with a 75% increase in posting volume from February to March, indicating intensified pre-election mobilisation.

The data reveals how pro-Kremlin geopolitical narratives are systematically intertwined with domestic electoral messaging, including content linked to political actors, blurring the line between foreign influence and national political discourse. Despite this, Telegram falls outside the strictest obligations of the Digital Services Act, highlighting a critical enforcement gap around a platform that is demonstrably shaping the election-period information environment.

Bulgarian Elections: Cognitive Shaping to Erode Trust in the System

In the weeks preceding Bulgaria’s 19 April parliamentary elections, the information environment has been deliberately shaped to erode trust in democratic institutions through coordinated, high-volume influence operations.

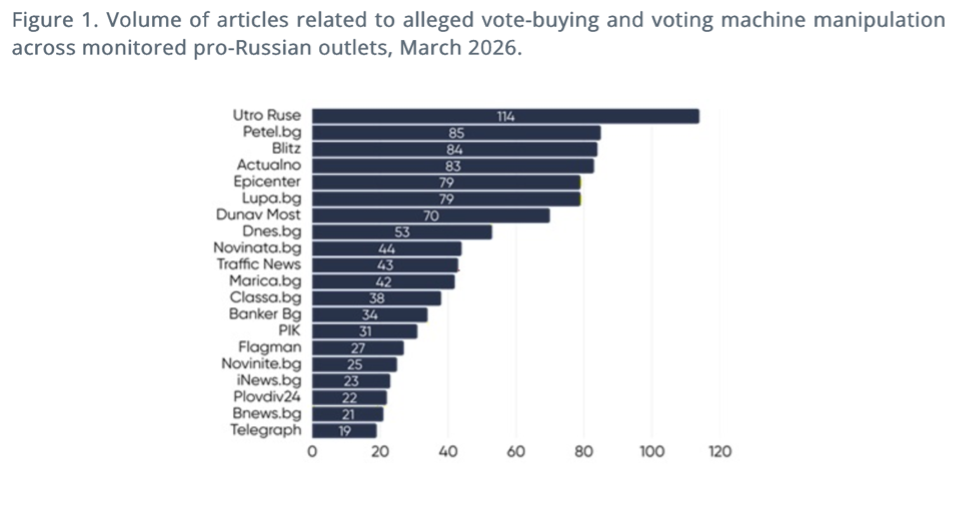

Monitoring conducted in March 2026 identified over 340 million impressions generated across interconnected media and social networks, equating to roughly three exposures per voter per day, signaling a shift toward proactive “cognitive shaping”. With this strategy, the objective is not persuasion but pre-emptive delegitimisation of the electoral process.

Legitimate state actions, including law enforcement against vote-buying and counter-disinformation measures, have been systematically reframed as authoritarian overreach, while synchronized amplification networks—many previously linked to pro-Kremlin ecosystems—have flooded the space with narratives portraying the government as illegitimate and elections as fraudulent.

These operations often converge and intensify around key moments, maximising narrative saturation and limiting institutional response capacity.

Before and After the Hungarian Elections

In its latest digest, Lakmusz gives an overview of the before and after of Hungary’s 12 April parliamentary elections. The monitoring highlighted how disinformation, platform dynamics, and structural electoral features intersected to shape both the campaign and its outcome.

Pre-election analysis found that AI chatbots variably reproduced misleading political claims, particularly those aligned with ruling-party narratives. The broader campaign environment saw AI-driven content, platform ad restrictions, and the entry of Russian disinformation actors collectively distorting the information space.

Despite higher posting volume from Fidesz, the opposition Tisza Party generated significantly stronger engagement and ultimately secured a two-thirds parliamentary majority under Hungary’s majoritarian electoral system, even without majority support among all eligible voters.

Post-election assessments confirmed that while the vote was competitive and orderly, it was conducted on an uneven playing field. This period still saw some AI-generated content, as highlighted in this article by Newtral, in which the featured image implies that Orbán’s defeat resulted in a surge of illegal immigration.

GLOBAL PULSE

Disinformation narratives shaping the world’s conversations

False Claims Spread Amid Strained Relations Between Pope Leo XIV and President Trump

With disinformation frequently seizing on fast-moving news, this article shows how a viral claim twisted a recent Vatican statement to fit an explosive narrative. Posts circulating across multiple platforms alleged that Pope Leo XIV had urged Americans to demand President Donald Trump’s impeachment, supposedly based on an English-language interview. But the original Vatican video shows something far more measured: the Pope called on “all people of goodwill” to seek peace, reject war, and respect international law, without mentioning Trump, the United States, or impeachment.

The article places the misunderstanding in a tense political moment, noting that U.S. military actions and President Trump’s criticism of the Pope had already heightened attention to Vatican remarks. Even so, AFP’s review of the full interview, official transcripts, and multilingual searches found no evidence supporting the impeachment claim. The widely shared interpretation was simply incorrect, illustrating, along with further coverage by the EDMO network, how quickly disinformation can attach itself to unfolding events.

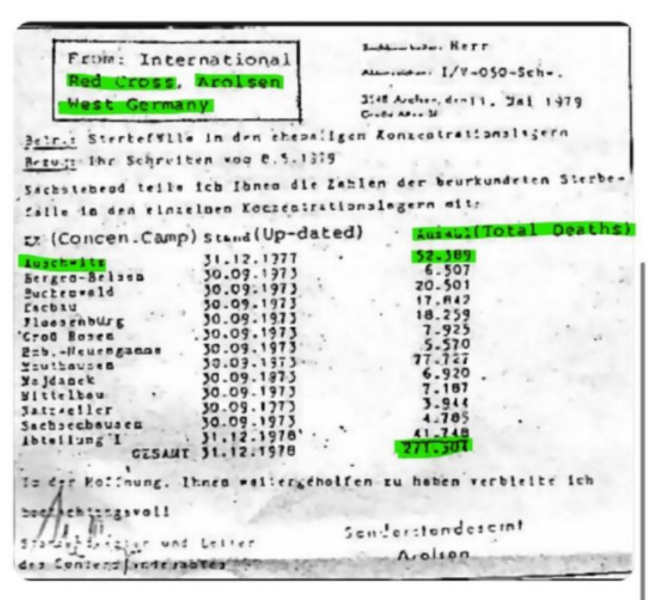

Selective Data at the Heart of a Persistent Holocaust Denial Claim

Holocaust denialism remains a persistent problem on social media, and the article shows how one of its common tactics works: circulating the misleading claim that only 271,000 Jewish people died in the Holocaust. That number is taken from a 1979 Red Cross document that listed only deaths for which formal certificates existed—mainly from a limited set of concentration camps. Because most victims of extermination camps, ghettos, and mass shootings were never officially registered, the figure represents only a tiny fraction of the real death toll.

The fact‑check emphasizes that decades of rigorous research – demographic studies, transport records, survivor testimony, and perpetrator documentation – consistently place the number of Jewish victims between 5.1 and 6 million. No credible historian supports the drastically reduced figure promoted in denialist posts. By explaining how a partial statistic is repurposed to mislead, the article highlights why Holocaust denialism continues to be a serious issue online and why clear, contextualized historical information is essential.

How Anti‑Women Narratives Hijack Feminism

The article situates a viral anti‑feminist video within a broader ecosystem of anti‑women disinformation that thrives on social media platforms known for rapid, low‑context sharing. The video claims that feminism has lowered wages, increased taxes, and ideologically targeted children — narratives that fact‑checkers show are built on distortions rather than evidence. Feminism, as defined by historians and researchers, is a movement for equal rights, not an economic plot. Official Latvian and OECD data contradict the claim that women entering the workforce reduced salaries; wages have risen over time, even after inflation. The idea that feminism “doubled” tax revenues is also misleading, since tax policy is set by governments, not by women’s employment patterns.

The article also highlights how these narratives spread: through short‑form videos, influencer‑style commentary, and algorithm‑boosted posts that reward emotional, polarizing content. Conspiracy‑tinged claims — such as feminism being secretly funded by powerful families or intelligence agencies to influence school curricula — gain traction because they fit the format of shareable, sensational storytelling. In reality, school curricula are shaped by governments and education experts, and historical funding for women’s rights initiatives focused on reducing discrimination and expanding access to education. By unpacking both the false claims and the channels that amplify them, the fact‑check shows how anti‑women disinformation adapts to digital platforms and why media literacy is essential to counter it.

ON A DIFFERENT NOTE

A European ‘CERN for Data and Democracy’ to bring together fragmented capacities in Europe for continued research on platforms at industrial scale is needed. A global innovation race without examining its societal implications may outpace the ability of societies to absorb those changes peacefully.

Joint Research Centre, Fractured reality: How democracy can win the global struggle over the information space

Paolo Cesarini, Editorial Director

Tommaso Canetta, Editor-in-Chief

Editorial Staff: Elena Coden, Paula Gori, Elena Maggi

This edition draws in part on automated translation and reflects information available as of 22 April 2026. Later developments may not be included.