
ON THE RISE

Weekly watch of emerging disinformation risks

Orbán has been defeated, but what comes next?

The reasons for Viktor Orbán’s defeat are likely multiple and intertwined. The Hungarian economy has performed poorly in the last few years, and the geopolitical situation turned against Orbán: the war against Iran waged by Trump and Netanyahu, two of his political allies, crippled the Hungarian prime minister’s claim that he was the candidate for peace, whereas choosing the opposition would have meant war (against Russia). Corruption scandals also piled up.

An important role was also played by the fight against the manipulation of the information space carried out by domestic and foreign actors in Hungary. The Hungarian and European community that counters disinformation managed to pre-empt many disinformation attacks, debunking and prebunking false flag operations, uncovering cases of foreign interference and networks of fake accounts spreading propaganda, verifying AI-generated content and, in general, spreading awareness among the public about different attempts to poison the wells of information.

Although disinformation came from more than one side, no false equivalence should be drawn: the pro-Orbán forces bear responsibility for the vast majority of the disinformation and manipulation circulating in Hungary before the vote. Their defeat in the April 12 vote should not be mistaken for the end of disinformation targeting Hungary. The long-term damage done by years of disinformation campaigns will persist for the foreseeable future, and the capture of media and institutions by Fidesz will not be reversed quickly. Foreign interference, too, is unlikely to stop after the elections. On the contrary: looking at the pieces on the chessboard, it is possible to foresee what will likely happen next.

Fidesz and the other groups supporting Orbán claimed in the last weeks that the EU (and Ukraine) interfered in the Hungarian elections. J.D. Vance, the U.S. vice president, while in Budapest to openly support Orbán on behalf of Trump’s administration, said that the EU in Hungary was responsible for “one of the worst examples of election interference” he had ever seen (the irony of the statement is clear).

The U.S. House Judiciary Committee, chaired by Jim Jordan, published a letter to EU Commissioner Henna Virkkunen the day before the vote, claiming that the Rapid Response System of the EU Code of Practice on Disinformation is a tool for censoring pro-Orbán voices before the vote (it is actually a mechanism that allows civil society organizations to bring to the attention of major online platforms content or accounts that threaten the integrity of elections: the decisions are always made by the platforms based on their own rules and procedures, and they bear sole responsibility for them).

It is therefore quite possible that one of the next reports by the U.S. House Judiciary Committee will allege that the EU conspired to make Orbán lose (it is worth noting that similar allegations have in the past proved to be unfounded). Disinformation attacks questioning the legitimacy of the new Hungarian government could then start, feeding a narrative about “stolen elections” like the one that circulated in the United States after the 2020 vote and paved the way for Trump’s comeback in 2024.

It is possible that the scale of Orbán’s defeat is such as to discourage similar attempts, or to drastically undermine their chances of success, but one lesson learned from the Hungarian elections is that it is important to anticipate probable disinformation and propaganda campaigns.

ZOOM-IN

A closer look at cases detected by the EDMO Network

Foreign and domestic actors tried to influence swing voters in Hungary until the very last day of the electoral campaign, exploiting well-known tactics and techniques to control the public debate on social media. These included an unprecedented use of AI for political communications, coordinated inauthentic behaviour across different platforms, non-transparent political ads, and more. Organisations within the EDMO Network have doubled down on their efforts to identify and document specific cases of information manipulation in real time, in a bid to strengthen voters’ awareness of impending threats to electoral integrity and boost resilience against influence operations coming from different fronts. The following selection of articles provides concrete examples of disinformation dynamics detected during the last phase of the electoral campaign.

Coordinated Networks and Political Ads Circumvent Content Moderation on TikTok and Facebook

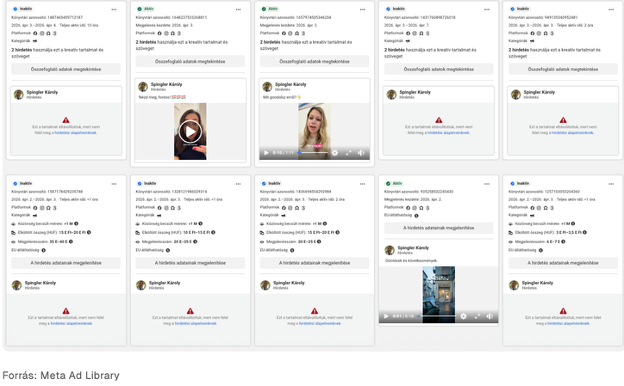

An investigation conducted by Lakmusz reveals that a newly created Facebook profile—using the image of a Russian man—spent large sums promoting pro-government content and anti-Tisza Party AI-generated videos during Hungary’s 2026 election campaign. The profile, registered on March 30, quickly became highly active, running dozens of advertisements despite Meta’s formal ban on political ads. In just a few days, the account published over 30 ads and spent at least 1.9 million forints, reaching nearly 7 million users.

Many of the promoted videos echoed government messaging, particularly claims that opposition energy policies would harm Hungarians. Although Meta removed a significant portion of the ads for violating its rules, enforcement was inconsistent: some ads remained active long enough to achieve substantial reach. Overall, the article highlights how easily political actors or proxies can bypass platform restrictions using fake profiles, paid promotion, and AI-generated content—raising concerns about transparency, accountability, and the effectiveness of platform moderation during elections.

This investigation follows a previous analysis by Lakmusz, which uncovered a coordinated network spreading pro-government campaign content on TikTok using shocking or emotionally manipulative AI-generated videos. Although TikTok repeatedly removed many of these accounts for coordinated or inauthentic behaviour, the network quickly regenerated: new profiles continuously replaced those that were deleted, allowing the campaign to persist. In its methods, the network resembled previously identified influence operations linked to Russian-style disinformation campaigns.

Synthetic Influence – Deepfakes and Artificial Intelligence in the Hungarian Election Campaign

A report by Political Capital examines how AI-driven content has been shaping political communication in Hungary’s 2026 election period. It focuses particularly on the rise of synthetic media, such as deepfake videos and AI-generated images, and their implications for the integrity of public debate. Overall, the report argues that AI-driven “synthetic influence” represents a growing risk to electoral integrity. Rather than relying solely on traditional disinformation, political actors can now deploy highly scalable, emotionally compelling, and difficult-to-detect content.

The analysis finds that AI tools were being widely used to produce persuasive political content, especially by actors linked to the governing party and its affiliated networks. These materials were often designed to evoke strong emotional reactions—such as fear, anger, or empathy—which can significantly influence public opinion even when audiences are aware that the content may be manipulated. Importantly, the emotional impact of such content can persist even after it is debunked. The report highlights that AI-generated imagery has become especially prominent on Facebook pages associated with pro-government media. While independent content creators and opposition actors also experimented with such tools, their reach and impact appear more limited. Among opposition groups, only a few actors were actively using AI-generated video content at a comparable level.

A key concern raised is that synthetic media can circumvent platform regulations, including rules governing political advertising, raising significant challenges for platforms attempting to enforce transparency and fairness in political campaigning. The study calls for stronger regulatory responses, improved platform accountability, and greater public awareness to mitigate the impact of these technologies on democratic discourse.

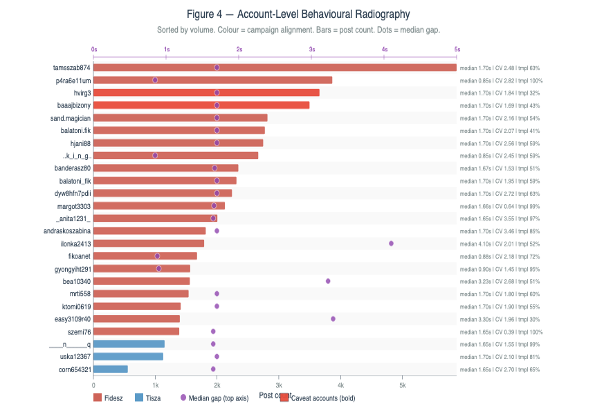

Coordinated Inauthentic Behaviour – Automation Suffocates Information Spaces during the Hungarian Electoral Campaign

The article focuses on 19 TikTok live sessions hosted by Prime Minister Viktor Orbán between 18 and 29 March 2026. Researchers collected over 257,000 comments from more than 12,500 users and found clear signs of systematic, non-organic behaviour. Large volumes of identical or near-identical comments were posted repeatedly within short timeframes, indicating the use of automation or coordinated scripts rather than spontaneous user participation. Rather than aiming to persuade audiences, these campaigns were intended to flood the information space and displace discussion. By saturating comment sections with repetitive messages, they effectively reduced the visibility of authentic political discourse, while creating an artificial sense of consensus or popularity.
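For readers curious how a signal of this kind can be checked in principle, the following is a minimal sketch and not the researchers’ actual method, which the article summary does not describe. It assumes comments are available as hypothetical `(timestamp_seconds, user, text)` tuples, treats comments as near-identical when they match after lowercasing and stripping accents and punctuation, and uses an illustrative 60-second window and threshold of three repeats. The sample comments are invented.

```python
from collections import defaultdict
import re
import unicodedata


def normalize(text: str) -> str:
    """Lowercase, drop accents and punctuation, collapse whitespace."""
    text = unicodedata.normalize("NFKD", text.lower())
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = re.sub(r"[^\w\s]", "", text)
    return " ".join(text.split())


def find_bursts(comments, window_seconds=60, min_repeats=3):
    """Return {normalized_text: peak_count} for texts repeated at least
    `min_repeats` times inside any `window_seconds`-long time window.

    `comments` is an iterable of (timestamp_seconds, user, text) tuples;
    this shape is an assumption made for the sketch.
    """
    stamps_by_text = defaultdict(list)
    for timestamp, _user, text in comments:
        stamps_by_text[normalize(text)].append(timestamp)

    bursts = {}
    for text, stamps in stamps_by_text.items():
        stamps.sort()
        start = 0
        peak = 0
        # Sliding window over sorted timestamps of one normalized text.
        for end, timestamp in enumerate(stamps):
            while timestamp - stamps[start] > window_seconds:
                start += 1
            peak = max(peak, end - start + 1)
        if peak >= min_repeats:
            bursts[text] = peak
    return bursts


# Invented sample data, for illustration only.
sample_comments = [
    (0, "u1", "Nagyon jó!"),
    (12, "u2", "nagyon jo"),
    (25, "u3", "NAGYON JÓ!!!"),
    (40, "u4", "What about healthcare?"),
    (900, "u5", "Nagyon jó!"),
]
print(find_bursts(sample_comments))
```

Exact matching after normalization is a deliberately crude stand-in for near-duplicate detection; a real analysis would likely need fuzzier similarity measures and account-level signals as well.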

A key point concerns the responsibility of platforms like TikTok under the EU’s Digital Services Act (DSA). As a designated Very Large Online Platform, TikTok is required to assess and mitigate systemic risks to electoral integrity. However, the scale and persistence of the detected activity suggest that these obligations were not adequately fulfilled, since the manipulation continued unchecked during a critical pre-election period.

ELECTION BEAT

Tracking electoral disinformation through EDMO Hubs

Defending the Vote: Narrative Pressure and Infrastructure Risks Update

This report looks at information manipulation risks and disinformation trends observed in February–March 2026 in the run-up to the Bulgarian elections.

It traces how pro-Kremlin narratives enter the information ecosystem, moving from fringe outlets into mainstream discourse and then being amplified across platforms. Key findings include the rapid activation of the Pravda network following the seizure of Ukrainian assets in Hungary, the continued republication of content from EU-sanctioned Russian sources, and the central role of Telegram as a high-reach distribution layer. Notably, 51% of Pogled Info’s reposted videos accounted for nearly 88% of its total monthly reach. The “Petrohan” case further illustrates how narratives are laundered from fringe media into parliamentary debate.

The report also highlights key structural vulnerabilities, including rapid content-flooding capacity, persistent gaps in sanctions enforcement, and limited monitoring of TikTok and other short-form video platforms.

Inside the Facebook Feed Before Bulgaria’s 2026 Election: Narrative and Manipulation Pattern Mapping

This second edition of the Narrative Atlas, a weekly analytical update tracking disinformation ahead of the Bulgarian parliamentary elections, focuses on the period 30 March–5 April.

It identifies “Geopolitical Existentialism & ‘National Treason’” as the dominant narrative, triggered by the signing of a 10-year security agreement with Ukraine. This is followed by narratives centred on institutional delegitimisation – “Institutional Sabotage & the ‘Censorship Padlock’” and “The ‘Captured State’ & Judicial Sabotage” – as well as themes of social division and “Spiritualised Despair”, which frame political instability through non-rational interpretations.

The report further outlines how these narratives spread through a coordinated ecosystem combining high-reach influencers, algorithmic amplification, and the exploitation of informational gaps, and concludes with a comparison to trends identified in the previous week.

Facebook Network Raises False Claims of Election Fraud in Germany – Trail Leads to Vietnam

Political unease is mounting in Germany ahead of the Eastern Länder elections scheduled for this autumn, where anti-democratic forces could, for the first time, secure control of the government. The recent, highly contentious state elections in Baden-Württemberg and Rhineland-Palatinate offered an early indication of the role disinformation is likely to play in the coming months.

This investigation uncovers a coordinated network of Facebook pages that disseminated false allegations of electoral fraud following Germany’s March 2026 state elections. By pushing fabricated narratives tailored to specific regions, the network aimed to erode public confidence in the integrity of the democratic process.

The disinformation campaign operates through interconnected Facebook pages masquerading as legitimate news outlets, directing users to external websites that publish sensationalist and wholly fabricated articles.

These stories frequently and falsely attribute claims of election fraud to public figures or political actors, lending them an air of credibility. The investigation identifies consistent patterns suggesting that the network is internationally operated, with multiple indicators pointing to Vietnam. The associated websites appear to form part of a broader content‑farming operation, designed primarily to generate advertising revenue through high‑engagement, misleading material rather than to advance a coherent ideological or political agenda.

GLOBAL PULSE

Disinformation narratives shaping the world’s conversations

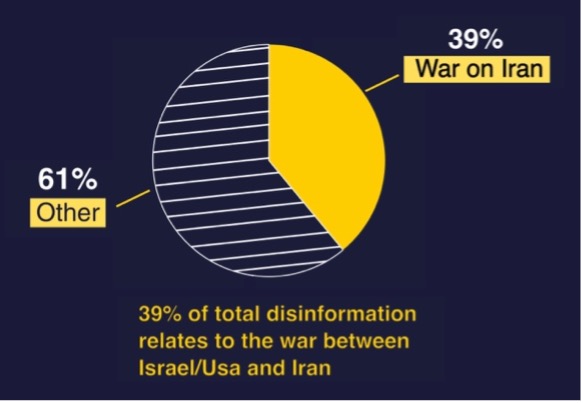

Disinformation About the Iran War: One of the Largest Disinformation Phenomena Ever Detected in the EU

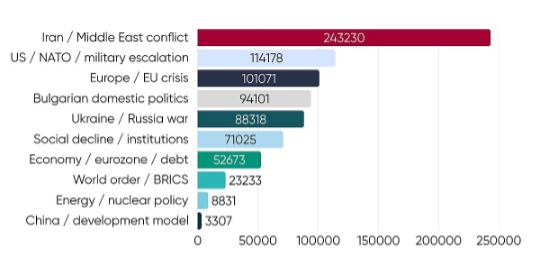

The latest Monthly Brief, compiled with data from the EDMO Fact-Checking Network, highlights a sharp rise in disinformation related to the conflict between the US, Israel and Iran, which dominated false content trends in March.

Following the 28 February joint strike by the US and Israel on Iran, social media across Europe saw a surge in misleading narratives about the conflict. This spike, likely driven by both ideological positioning and click-driven monetization, also renewed attention on the crisis in Gaza, contributing to a four-percentage-point increase in related disinformation.

As observed during the June 2025 escalation between Israel and Iran, artificial intelligence tools were widely used to generate and amplify false or exaggerated claims about attacks and retaliations. In this context, several EDMO fact-checking organisations also flagged the unreliability of AI chatbots as verification tools, with particular concerns raised about Grok.

This further fueled the spread of misleading images and videos, a common trend in moments of crisis. Old, unrelated photos were presented as showing destruction in Tel Aviv, Dubai and Riyadh, and a misleading video spread the claim that the house of Israeli Prime Minister Benjamin Netanyahu had been hit by a strike, fueling the conspiracy theory that he had been killed.

Russia’s tale of the Baltics opening the skies to Ukrainian drones: “Disinformation as usual” or the harbingers of Russian military aggression against NATO’s Eastern flank?

When several Ukrainian drones crashed in the Baltics in March, Russian propaganda swiftly pushed a story that Latvia, Lithuania and Estonia had deliberately “opened” their airspace to enable Kyiv to attack Russia. All three countries deny the claim, with Latvia formally accusing Moscow of a disinformation campaign and demanding a retraction.

Re:Baltica, a member of the EDMO hub BECID, reconstructs how the story spread – and why it carries the fingerprints of coordinated state propaganda. Across the Atlantic, Moscow’s threats and “last warning” raise alarm, with some US experts warning that in addition to Russia’s ongoing war of aggression against Ukraine, “the Kremlin may be setting conditions to use these allegations as the basis for military action in the airspace over one or more of the Baltic states”.

Just like during Covid, conspiracy theorists are thriving amid the Middle East fuel crisis

The Iran war continues to provide fertile ground for disinformation that exaggerates government intentions and stokes public anxiety: conspiracy theorists are exploiting the current interruptions to global oil flows to resurrect fear-based narratives familiar from the Covid-19 era. Online networks are framing fuel-saving advice and contingency planning as evidence of looming “energy lockdowns” and state control, despite these measures being responses to genuine supply disruptions and price spikes.

Aidan O’Brien of EDMO Ireland spoke with The Journal about the risk such disinformation poses to social cohesion at a time when collective action may be needed. O’Brien notes that conspiracy groups are now more organised and amplified by social media platforms that increasingly reward viral content, making the spread of fear faster and harder to contain than during the pandemic.

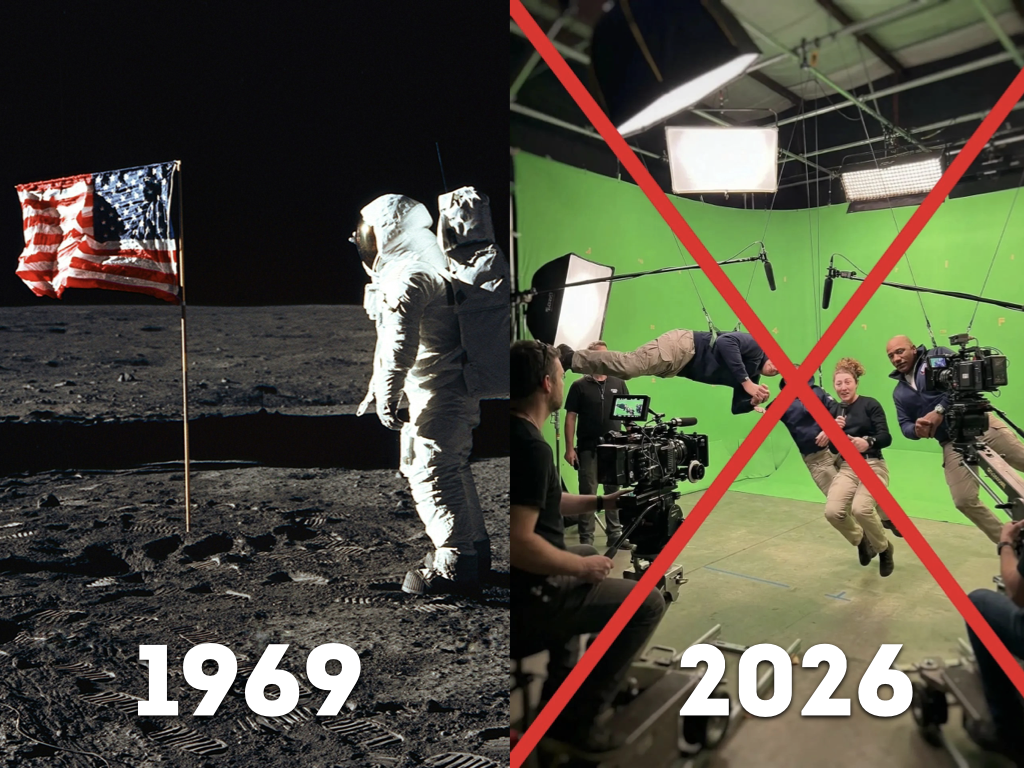

Back to the Moon, Back to the Myths: As Artemis II Captures Global Attention, Long-Dormant Conspiracy Narratives Re-Emerge

This month’s Artemis II mission – remarkable for the scientific findings and widespread excitement it has generated about the Moon and deep space – has also yielded a more terrestrial insight: while no new life has been encountered beyond Earth yet, familiar disinformation narratives have found new life of their own, resurfacing with striking ease after extended periods of relative quiescence.

Several members of the EDMO network have encountered and debunked circulating online content claiming the NASA-led (and ESA-supported) mission is a hoax, echoing classic conspiracies around the 1969 moon landing. Another category of viral content doesn’t question the mission per se; instead, it shows either particularly breathtaking but fake imagery, or supposed unexplained, mysterious phenomena on the Moon that are actually AI-generated videos – all for monetisation purposes. In this article, IBERIFIER member Verifica dismantles one of the more sophisticated cases.

ON A DIFFERENT NOTE

The European public is divided on the issue of Chat Control 2.0: while the majority expects increased safety, a portion of the population expresses concerns about threats to freedom of speech or invasions of privacy. At the same time, people’s willingness to change their online behavior is growing.

CEDMO, CEDMO Survey

Paolo Cesarini, Editorial Director

Tommaso Canetta, Editor-in-Chief

Editorial Staff: Elena Coden, Paula Gori, Elena Maggi

This edition draws in part on automated translation and reflects information available as of 15 April 2026. Later developments may not be included.