Hungary’s 2026 Election: AI-Driven Post-Reality Campaigning and Its Limits

The views expressed in this publication are those of the author and do not necessarily reflect the official stance of the European Digital Media Observatory. This text has been published as part of the first edition of the new monthly EDMO Signals & Noise newsletter.

Authors: Péter Krekó, Richárd Demény, Csaba Molnár, Adél Tilistyák, Konrad Bleyer-Simon

Fidesz’s extensive use of AI-generated content in the 2026 parliamentary election campaign marked a turning point in campaign strategy, as AI-driven manipulation and disinformation reached an unprecedented level in the government’s cognitive warfare against its citizens. Although Fidesz lost the election, similar tactics will be used in the future as well to flood the information space with emotionally charged AI-generated content.

Fidesz has been a trendsetter in developing methods, manipulations, and communication tactics in campaigns to secure parliamentary election victories. Our study showed that the 2026 elections once again served as a laboratory for election manipulation, setting new precedents and revealing techniques that could be replicated by illiberal leaders in other countries. Fidesz’s trendsetter role might decline in the future, but deepfakes will definitely not disappear from campaigns.

AI-driven manipulation and electoral disinformation are increasingly widespread in electoral politics. During the 2024 U.S. presidential election campaign, AI-generated images and deepfake videos were used to promote and discredit political actors. Ahead of the 2025 elections in Germany, the Alternative for Germany (AfD) used AI-generated content to reinforce its anti-immigration narratives, while Russian-backed deepfakes targeted political opponents. In the 2025 Irish presidential election campaign, a widely shared deepfake video, in which one of the candidates, Catherine Connolly, appeared to announce her withdrawal, disrupted the campaign. While she won the election, the video caused significant confusion. In the last Slovak parliamentary elections, audio deepfakes may have helped shift voters toward the Smer party.

Against this broader international backdrop, Fidesz recognized the potential of AI-driven disinformation and, in its 2026 election campaign, exploited it to a degree that no other EU country has yet experienced.

While the party previously flooded social media with political ads, it had to adapt to the ban on political ads on social media by resorting to manipulative, norm-breaking tactics, including content flooding and organic and synthetic amplification. One tactic stood apart from the rest: the strategic use of emotionally charged AI-generated content.

Fidesz heavily relied on disseminating AI-generated content because it simultaneously offers psychological advantages, spreads easily on social media, and converts political messaging into simplified visual forms.

A public opinion survey conducted by Political Capital Institute between 23 and 26 March 2026 used telephone interviews with a representative sample of 1,000 individuals aged 18 and older in Hungary to measure the impact of AI-generated content in the campaign.

AI-generated videos and images transform political messages into highly persuasive and emotionally powerful visual narratives. People tend to trust visual and audiovisual content more than written words because they believe what they see with their own eyes. Such content often evokes strong emotions, such as fear, anger, empathy, or moral outrage, which reduces critical thinking and increases the likelihood of sharing. These vivid representations have a long-lasting impact since they shape public beliefs and perception even when they are exposed as false.

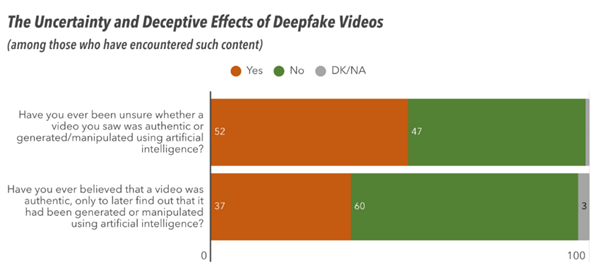

Our public opinion survey found that AI-generated videos had contributed to substantial levels of uncertainty. Over half of the people who had encountered deepfake videos (52%) reported being unsure whether a video was authentic or AI-generated. Furthermore, over one-third (37%) had been misled into believing manipulated content was real at some point. At the same time, respondents tended to overestimate their own ability to detect such content, while expressing low confidence in others’ ability to do so.

In addition to these psychological advantages, the effectiveness of AI-generated videos and images is reinforced by their potential to go viral. AI-generated content often achieves greater reach than traditional political material, especially when amplified by paid advertising. This is possible because platforms’ filtering systems are not always capable of detecting the political nature of such content, allowing it to reach a wide audience despite existing restrictions. Even when such political ads were removed, advertisers relaunched them immediately. Sometimes, these ads remained active for several hours before being taken down again, and this cycle was repeated multiple times. Our public opinion survey confirmed the widespread reach of AI-generated content: the vast majority of respondents (73%) reported encountering it on social media, with exposure varying significantly by political affiliation, age, and education.

Fidesz ran its campaign almost exclusively on disinformation narratives about Ukraine, the EU, and the opposition TISZA Party, using AI-generated videos and images to make its political messages appear more credible and realistic. The party sought to generate war psychosis and exacerbate anxiety by using AI-generated visuals depicting the horrors of war, reaching unprecedented levels of scaremongering. Other visuals sought to make the alleged dangers posed by Ukraine and a TISZA government appear more real. AI-generated versions of Péter Magyar were shown as puppets of President Volodymyr Zelenskyy and European Commission President Ursula von der Leyen, serving their interests. AI-generated videos also spread disinformation about TISZA’s alleged austerity plans, which a court ruled were false. An AI-written “Tisza program” was circulated by pro-government media with absurd “promises” such as extra taxes on pets. Despite a court ruling, a tabloid “newspaper” featuring these promises was sent to every household.

The significant proliferation of AI-generated content will continue to influence Hungarian politics. Hungary lacks a comprehensive framework governing the use of AI for political communication, treating AI as a tool and placing responsibility on content creators and disseminators. The EU’s AI Act gradually introduces stricter measures for high-risk AI systems, including those intended to influence the outcome of an election or voting behavior. However, these high-risk AI systems will not be banned.

Lack of success: possible reasons

Despite Fidesz’s extensive use of AI-generated content for political communication, AI-driven manipulation and disinformation were only partially successful in swaying public opinion during the campaign. A key contributing factor is that the Hungarian public rejects the use of AI-generated or manipulated content. Our public opinion survey found that 90% of respondents considered the use of such tools in politics to be entirely unacceptable, while only 3% found them somewhat acceptable. Consequently, although the use of AI-generated content is becoming more prevalent, it is not widely accepted in society.

But of course, additional factors were also at play, closely tied to the broader political environment. For the first time, Viktor Orbán faced a genuine challenger – one capable of “fact-checking” and directly contesting his disinformation narratives, and who, crucially, enjoyed higher levels of trust among voters than the incumbent prime minister.

The traditionally dark, dystopian tone of government campaigns – centered almost exclusively on geopolitical threats from Ukraine and Brussels – was countered by a more hopeful and optimistic campaign led by Tisza and Péter Magyar. Their messaging shifted the focus back to domestic issues that voters care most about, such as education, healthcare, and corruption.

At the same time, there are signs that voters have developed a degree of fatigue toward government disinformation, as reflected in our polling data before the elections. Preemptive communication, that is, warning citizens in advance about expected manipulation attempts, also played an important role in neutralizing disinformation before it could spread, particularly with regard to Russian foreign information manipulation and interference (FIMI) and Russian disinformation spread by political actors. Finally, the independent, predominantly online media has managed to build a substantial audience in recent years, despite sustained governmental pressure. Investigative reporting and coverage exposing patterns of abuse of power have, once again, effectively challenged official narratives and, in many cases, rendered them increasingly irrelevant.

Our recommendations emphasize a coordinated response to the risks posed by AI-generated content.

- Realistically, we need to adapt to an environment in which generative AI is becoming an everyday tool of political communication. Not all AI-generated content should be treated with the same level of scrutiny when it comes to regulation and content moderation. The intent to deceive appears to be a crucial factor, even if attributing intent is not always simple. It is therefore important to distinguish between the more innocuous, everyday use of AI in political campaigning, where the true/false dichotomy and deceptive intent are less central, and the more harmful forms of disinformation discussed above, such as deepfakes that target political opponents without their consent and aim to directly mislead the electorate about their statements, goals, and political manifesto.

- Our findings show that the majority of society opposes the use of deepfakes in political campaigns – a pattern that is likely to extend to other countries as well. This normative stance can be leveraged through advocacy efforts. For example, in Italy, Facta proposed a code of conduct on the use of deepfakes in political campaigns, which was ultimately signed by the political parties themselves.

- Social media, microblogging, streaming, and search platforms should systematically monitor content published by the largest political actors, including candidates, political parties, activists, and influencers, and ensure that any AI-generated or manipulated material is clearly labeled. Unlabeled deepfake content should also be excluded from monetization and advertising services. This is in line with the principles of the Code of Practice on marking and labelling of AI-generated content, which is currently being drafted by the European Commission.

- Content creators, including political figures at the national level, should always label AI-generated content and ensure that shared content is properly marked.

- A legally mandated transparency and labeling framework for AI-generated content can be modeled on Denmark’s approach. The Danish regulation requires clear, standardized disclosure when content is fully or partially generated by artificial intelligence. It also requires that AI-generated content depicting identifiable individuals be created or disseminated only with their explicit, informed consent, and that it be removed in the absence of such consent.

- All generative AI platforms, as well as providers and deployers of AI services, should support the development of forensic detectors to identify AI-generated content.

- EU institutions should be vigilant about the systemic risks posed by unlabeled AI-generated content. They should also consider introducing stricter labeling obligations for technology services that produce, deploy, or distribute such content.